TV shows, too, are posted about and discussed at length on Twitter. Below I use this to see how the individual characters of the show "Money Heist" (German title: "Haus des Geldes") come across with the audience. The show has already moved me to write an article once; let's see what we learn this time.

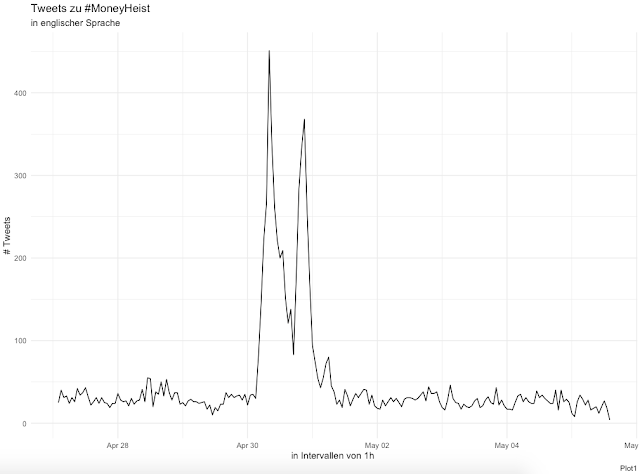

A look at the number of tweets shows that the show really was a hot topic. The fourth season was released on April 3, 2020, and two large waves of tweets followed.

Individual characters already show up among the most frequent terms (Plot 2).

The bigram network (Plot 3) also reveals combinations such as "hate-tokyo". This need not be representative, but it gives a first hint. "bella-ciao" shows up as well; unfortunately, so do combinations from tweets that used the hashtag for unrelated topics.
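To make the idea of a bigram concrete, here is a minimal base-R sketch. The three tweets are invented for illustration and are not taken from the dataset, and `make_bigrams` is a hypothetical helper rather than part of the analysis code; the analysis itself relies on `unnest_tokens()` from tidytext.

```r
# Invented example tweets (not from the dataset)
toy_tweets <- c("i hate tokyo", "bella ciao forever", "why do i hate tokyo")

# Hypothetical helper: split a tweet into words and pair each word with its successor
make_bigrams <- function(text) {
  words <- strsplit(tolower(text), "\\s+")[[1]]
  if (length(words) < 2) return(character(0))
  paste(head(words, -1), tail(words, -1), sep = "-")
}

# Count how often each bigram occurs across all tweets
bigram_counts <- sort(table(unlist(lapply(toy_tweets, make_bigrams))), decreasing = TRUE)

as.integer(bigram_counts["hate-tokyo"])  # 2
```

In the network plot, each such pair becomes an edge between two word nodes, and only pairs above a frequency threshold are drawn.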

So, on to the analysis of the individual characters. We can plot how often each one was mentioned (Plot 4).
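How a name is matched affects which tweets count as a mention. The following base-R sketch, again with invented tweets, compares a loose pattern with one that anchors the name at word boundaries. It uses `grepl()` for brevity; this is an illustration under those assumptions, not the matching used for the plots.

```r
# Invented example tweets (not from the dataset)
toy_tweets <- c("tokyo ruined the plan",
                "that was a serious mistake",
                "rio deserves better")

# Loose pattern: any character followed by "rio"
loose <- grepl(".rio", toy_tweets)
loose   # FALSE TRUE FALSE: hits "serious", misses "rio" at the start of a tweet

# Word-boundary pattern: "rio" as a whole word only
strict <- grepl("\\brio\\b", toy_tweets, perl = TRUE)
strict  # FALSE FALSE TRUE
```

Short names such as "rio" are the most exposed to this kind of accidental match, because they occur inside many ordinary English words.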

And we can plot how positively or negatively each one comes across with the tweeting audience (Plot 5).

Note, however, that some characters were mentioned relatively rarely. To get a more general picture, we therefore restrict ourselves to characters with more than 30 tweets (Plot 6).

That Gandia is viewed negatively comes as no surprise; the negative scores of the other main characters do. Perhaps this is because a tweet is more likely to be written about a character's misstep than about their personality. And this series thrives on missteps and plot twists.
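The score behind these plots is the share of positive sentiment words minus the share of negative ones, in percent, which is what `positive_percent - negative_percent` computes in the code further down. A minimal sketch of that arithmetic follows; the function name `sentiment_score` and the counts are made up for illustration.

```r
# Hypothetical helper mirroring the percentage-based score:
# share of positive words minus share of negative words, in percent
sentiment_score <- function(positive, negative) {
  total <- positive + negative
  (positive / total - negative / total) * 100
}

# Invented counts: 12 positive and 28 negative sentiment words
sentiment_score(positive = 12, negative = 28)  # roughly -40
```

A score of -100 would mean that every matched sentiment word was negative, +100 that every one was positive. Normalizing this way keeps frequently and rarely mentioned characters comparable.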

In the same way, we can examine which emotions the characters are associated with (Plot 7).

Admittedly, this plot is a bit cluttered, even though we can already spot individual outliers. Below are therefore the individual profiles (Plot 8), again for characters with more than 30 tweets. Here we can see that Gandia and the Professor in particular stand out from the other characters.
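Each profile is a set of emotion counts rescaled to percentages, so that the shape of the profile does not depend on how often a character is mentioned. A small base-R sketch with invented counts, and only four of the lexicon's categories for brevity:

```r
# Invented emotion counts for one character (not from the dataset)
emotion_counts <- c(anger = 6, fear = 9, joy = 3, trust = 12)

# Rescale to percentages so that the profile sums to 100
emotion_profile <- round(emotion_counts / sum(emotion_counts) * 100, 1)

emotion_profile
# anger  fear   joy trust
#    20    30    10    40
```

A character stands out in the faceted plot when one of these shares is clearly larger or smaller than for the other characters.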

Note: These characterizations were produced with the help of the NRC lexicon. I also leaned heavily on this tutorial. Thanks!

And here is the code, written in RStudio:

library(tidyverse)

library(knitr)

library(tidytext)

library(rtweet)

library(igraph)

library(ggraph)

library(viridis) # provides scale_fill_viridis() and scale_color_viridis() used below

# Load the Twitter data

tweets <- search_tweets(q = "#MoneyHeist", n = 18000, include_rts = FALSE, lang = "en")

# Plot 1 - tweets over time

ts_plot(tweets, by = "1 hours") +

theme_minimal()+

scale_y_continuous(name = "# Tweets") +

labs(

x = "in 1-hour intervals",

title = "Tweets about #MoneyHeist",

subtitle = "in English",

caption = "Plot1")

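# Data prep

# custom stop words: show-related terms plus leftovers from URLs and HTML entities

custom_stop_words <- bind_rows(tibble(word = c("season", "tco", "netflix", "lacasadepapel", "money", "heist", "watch", "watching", "ukaidiwamagufuli", "gt"),

lexicon = "custom"),

stop_words)

dat <- tweets %>%

mutate(tweet_number = row_number()) %>%

select(tweet_number, text, created_at, source, followers_count, country) %>%

as_tibble() %>%

mutate(text = str_replace_all(text, "[^\x01-\x7F]", ""), # drop non-ASCII characters such as emoji

text = str_replace_all(text, regex("#moneyheist", ignore_case = TRUE), ""), # drop the search hashtag in any casing

text = str_replace_all(text, "https?://\\S+", ""), # drop whole URLs before the dots are stripped

text = str_replace_all(text, "&amp;|&gt;|&lt;", ""), # drop HTML entities instead of every "amp" inside a word

text = str_replace_all(text, "\\.|[[:digit:]]+", "")) # drop dots and digits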

dat_token <- dat %>%

unnest_tokens(word, text) %>%

anti_join(custom_stop_words, by = "word")

# Plot 2 - most frequent terms

dat_token%>%

na.omit()%>%

count(word, sort = TRUE) %>%

head(20) %>%

mutate(word = reorder(word, n)) %>%

ggplot(aes(x = word, y = n, fill = word))+

geom_col(show.legend = FALSE) +

coord_flip() +

scale_fill_viridis(discrete = TRUE, option="cividis") +

labs(

title = "Most frequent terms in tweets about #MoneyHeist",

subtitle = "in English",

caption = "Plot2")

# Plot 3 - bigram network

dat_bigram <- dat %>%

unnest_tokens(bigram, text, token = "ngrams", n = 2) %>%

separate(bigram, c("word1", "word2"), sep = " ") %>%

filter(!word1 %in% custom_stop_words$word,

!word2 %in% custom_stop_words$word) %>%

drop_na(word1) %>%

drop_na(word2) %>%

unite(bigram, word1, word2, sep = " ")

bigrams_separated <- dat_bigram %>%

separate(bigram, c("word1", "word2"), sep = " ") %>%

count(word1, word2, sort = TRUE) %>%

filter(n > 20) %>%

graph_from_data_frame()

ggraph(bigrams_separated, layout = "fr") +

geom_edge_link() +

geom_node_point() +

geom_node_text(aes(label = name), vjust = 1, hjust = 1, repel=TRUE)+

theme_void() +

labs(

title = "Relationships between bigrams in tweets about #MoneyHeist",

subtitle = "in English",

caption = "Plot3")

# Plot 4 - how often each character is mentioned

characters <- c("tokyo", "professor", "lisbon", "berlin", "moscow", "nairobi", "rio", "denver", "stockholm", "helsinki", "palermo", "manila", "gandia")

dat_token %>%

filter(word %in% characters)%>%

count(word, sort = TRUE) %>%

mutate(word = reorder(word, n)) %>%

ggplot(aes(x = word, y = n, fill = word)) +

geom_col(show.legend = FALSE) +

coord_flip()+

scale_y_continuous(name = "Number of mentions")+

scale_x_discrete(name = "Character")+

scale_fill_viridis(discrete = TRUE)+

labs(

title = "How often characters are mentioned in tweets about #MoneyHeist",

subtitle = "in English",

caption = "Plot4")

# Sentiment analysis

dat_mentions <- dat_token %>%

group_by(tweet_number) %>%

summarise(text = str_c(word, collapse = " ")) %>%

# flag each tweet per character; \\b anchors the name at word boundaries, so "rio" no longer

# matches "serious" and a name at the very start of a tweet is found as well

mutate(tokyo = str_detect(text, "\\btokyo\\b"),

professor = str_detect(text, "\\bprofessor\\b"),

lisbon = str_detect(text, "\\blisbon\\b"),

berlin = str_detect(text, "\\bberlin\\b"),

moscow = str_detect(text, "\\bmoscow\\b"),

nairobi = str_detect(text, "\\bnairobi\\b"),

rio = str_detect(text, "\\brio\\b"),

denver = str_detect(text, "\\bdenver\\b"),

stockholm = str_detect(text, "\\bstockholm\\b"),

helsinki = str_detect(text, "\\bhelsinki\\b"),

palermo = str_detect(text, "\\bpalermo\\b"),

manila = str_detect(text, "\\bmanila\\b"),

gandia = str_detect(text, "\\bgandia\\b")) %>%

unnest_tokens(word, text) %>%

anti_join(stop_words, by = "word")

# helper: per-tweet bing sentiment for all tweets that mention one character

score_character <- function(name) {

scored <- dat_mentions %>%

filter(.data[[name]]) %>%

inner_join(get_sentiments("bing"), by = "word") %>%

count(tweet_number, sentiment) %>%

spread(sentiment, n, fill = 0)

# make sure both columns exist even if one polarity never occurs

if (!"positive" %in% names(scored)) scored$positive <- rep(0, nrow(scored))

if (!"negative" %in% names(scored)) scored$negative <- rep(0, nrow(scored))

scored %>%

mutate(sentiment = positive - negative, character = name)

}

# apply the helper to every character and stack the results

dat_sentiment <- bind_rows(lapply(characters, score_character)) %>%

group_by(character) %>%

mutate("tweetnumber" = 1) %>%

summarise(positive = sum(positive),

negative = sum(negative),

overall = sum(sentiment),

n = sum(tweetnumber)) %>%

gather(positive:overall, key = type, value = score) %>%

mutate(type = factor(type, levels = c("positive", "negative", "overall")))%>%

spread(type, score)%>%

group_by(character) %>%

mutate(total = positive + negative) %>%

mutate(positive_percent = positive/total*100,

negative_percent = negative/total*100,

sentiment = positive_percent - negative_percent) %>% # sentiment score as a percentage, because the number of mentions differs per character

select(n, character, positive_percent, negative_percent, sentiment)%>%

arrange(desc(sentiment))

# Plot 5 - sentiment score per character

dat_sentiment %>%

ggplot(aes(x = reorder(character,sentiment), y = sentiment, fill= sentiment)) +

geom_col(show.legend = FALSE) +

scale_fill_viridis(option="cividis") +

scale_y_continuous(name = "Sentiment score (%)")+

scale_x_discrete(name = "Characters") +

theme(axis.text.x = element_text(angle = 45, hjust = 1)) +

labs(

title = "Sentiment score per character",

subtitle = "in English tweets about #MoneyHeist",

caption = "Plot5")

# Plot 6 - sentiment score for characters with more than 30 tweets

dat_sentiment %>%

filter(n > 30) %>%

ggplot(aes(x = reorder(character,sentiment), y = sentiment, fill= sentiment)) +

geom_col(show.legend = FALSE) +

scale_fill_viridis(option="cividis") +

scale_y_continuous(name = "Sentiment score (%)")+

scale_x_discrete(name = "Characters") +

theme(axis.text.x = element_text(angle = 45, hjust = 1))+

labs(

title = "Sentiment score per character",

subtitle = "more than 30 tweets about #MoneyHeist",

caption = "Plot6")

# Emotions per character

# helper: per-tweet NRC emotion counts for all tweets that mention one character

# (get_sentiments("nrc") needs the textdata package and asks once to download the lexicon)

emotions_for <- function(name) {

dat_mentions %>%

filter(.data[[name]]) %>%

inner_join(get_sentiments("nrc"), by = "word") %>%

count(tweet_number, sentiment) %>%

spread(sentiment, n, fill = 0) %>%

mutate(character = name)

}

# apply the helper to every character and stack the results

dat_emotions <- bind_rows(lapply(characters, emotions_for)) %>%

group_by(character) %>%

mutate("tweetnumber" = 1) %>%

summarise(anger = sum(anger),

anticipation = sum(anticipation),

disgust = sum(disgust),

fear = sum(fear),

joy = sum(joy),

negative = sum(negative),

positive = sum(positive),

sadness = sum(sadness),

surprise = sum(surprise),

trust = sum(trust),

n = sum(tweetnumber)) %>%

gather(anger:trust, key = type, value = score) %>%

mutate(type = factor(type, levels = c("anger", "anticipation","disgust","fear","joy","negative","positive","sadness","surprise","trust")))%>%

spread(type, score) %>%

group_by(character) %>%

mutate(total = anger + anticipation + disgust + fear + joy + negative + positive + sadness + surprise + trust) %>%

mutate(anger_percent = anger/total*100,

anticipation_percent = anticipation/total*100,

disgust_percent = disgust/total*100,

fear_percent = fear/total*100,

joy_percent = joy/total*100,

negative_percent = negative/total*100,

positive_percent = positive/total*100,

sadness_percent = sadness/total*100,

surprise_percent = surprise/total*100,

trust_percent = trust/total*100) %>% # emotion scores as percentages, because the number of mentions differs per character

select(n, character, anger_percent, anticipation_percent, disgust_percent, fear_percent, joy_percent, negative_percent, positive_percent, sadness_percent, surprise_percent, trust_percent)

# Plot 7 - emotions per character

dat_emotions %>%

filter(n > 30) %>%

select(anger_percent, anticipation_percent, disgust_percent, fear_percent, joy_percent, negative_percent, positive_percent, sadness_percent, surprise_percent, trust_percent, character) %>%

`colnames<-`(c("anger","anticipation", "disgust","fear","joy","negative","positive","sadness","surprise","trust","character")) %>%

gather(key = "variable", value = "value", -character) %>%

mutate_all(~replace(., is.na(.), 0)) %>%

ggplot(aes(x = variable, y = value, fill=character, group= character)) +

geom_line(aes(color = character), alpha=0.7 , size=1) +

geom_point(aes(color=character), size=1.5) +

scale_color_viridis(discrete=TRUE, option="cividis") +

theme(axis.text.x = element_text(angle = 45, hjust = 1)) +

labs(

x = "Emotions", y = NULL,

title = "Emotions per character",

subtitle = "in English tweets about #MoneyHeist",

caption = "Plot7")

dat_emotions %>%

filter(n > 30) %>%

select(anger_percent, anticipation_percent, disgust_percent, fear_percent, joy_percent, negative_percent, positive_percent, sadness_percent, surprise_percent, trust_percent, character) %>%

`colnames<-`(c("anger","anticipation", "disgust","fear","joy","negative","positive","sadness","surprise","trust","character")) %>%

gather(key = "variable", value = "value", -character) %>%

mutate_all(~replace(., is.na(.), 0)) %>%

ggplot(aes(x = variable, y = value)) +

geom_polygon(aes(group = character, color=character, fill=character), size = 1) +

coord_polar(clip = "off")+

scale_fill_viridis(discrete=TRUE, option="cividis") +

scale_color_viridis(discrete=TRUE, option="cividis") +

facet_wrap(~character)+

theme(legend.position = "none") +

labs(

x = "Emotions", y = NULL,

title = "Emotions associated with the characters",

subtitle = "in English tweets about #MoneyHeist",

caption = "Plot8")